Add github action to automatically push to pypi on Release x.y.z commit (#681)

* Add github action to automatically push to pypi on Release x.y.z commit

* some housekeeping for pypi upload

* add version.py

Co-authored-by: Jong Wook Kim <jongwook@nyu.edu>


.github/workflows/python-publish.yml (new file, 37 changed lines)

    @@ -0,0 +1,37 @@
    +name: Release
    +
    +on:
    +  push:
    +    branches:
    +      - main
    +jobs:
    +  deploy:
    +    runs-on: ubuntu-latest
    +    steps:
    +    - uses: actions/checkout@v2
    +    - uses: actions-ecosystem/action-regex-match@v2
    +      id: regex-match
    +      with:
    +        text: ${{ github.event.head_commit.message }}
    +        regex: '^Release ([^ ]+)'
    +    - name: Set up Python
    +      uses: actions/setup-python@v2
    +      with:
    +        python-version: '3.8'
    +    - name: Install dependencies
    +      run: |
    +        python -m pip install --upgrade pip
    +        pip install setuptools wheel twine
    +    - name: Release
    +      if: ${{ steps.regex-match.outputs.match != '' }}
    +      uses: softprops/action-gh-release@v1
    +      with:
    +        tag_name: v${{ steps.regex-match.outputs.group1 }}
    +    - name: Build and publish
    +      if: ${{ steps.regex-match.outputs.match != '' }}
    +      env:
    +        TWINE_USERNAME: __token__
    +        TWINE_PASSWORD: ${{ secrets.PYPI_API_TOKEN }}
    +      run: |
    +        python setup.py sdist
    +        twine upload dist/*
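The Release and Build-and-publish steps run only when the head commit message matches `^Release ([^ ]+)`, and the captured group becomes the tag name. A minimal sketch of that gating logic, assuming Python's `re.match` behaves like the JavaScript regex evaluated by `actions-ecosystem/action-regex-match` with no extra flags; `release_tag` is a hypothetical helper, not part of the workflow:

```python
import re
from typing import Optional

# Same pattern string as the workflow's `regex:` input.
WORKFLOW_PATTERN = r"^Release ([^ ]+)"


def release_tag(commit_message: str, pattern: str = WORKFLOW_PATTERN) -> Optional[str]:
    """Return the tag the workflow would create, or None when the gated steps skip.

    Assumption: re.match stands in for the action's JavaScript regex engine.
    """
    match = re.match(pattern, commit_message)
    if match is None:
        # `outputs.match` would be '', so the Release and publish steps are skipped.
        return None
    # Mirrors `tag_name: v${{ steps.regex-match.outputs.group1 }}`.
    return "v" + match.group(1)


print(release_tag("Release 20230117"))        # v20230117
print(release_tag("Release 1.2.3 notes"))     # v1.2.3
print(release_tag("Fix typo in README"))      # None
# `[^ ]` excludes only the space character, so it also matches newlines:
print(repr(release_tag("Release 20230117\n\n* notes")))                     # 'v20230117\n\n*'
print(repr(release_tag("Release 20230117\n\n* notes", r"^Release (\S+)")))  # 'v20230117'
```

The last two lines show a consequence of the pattern as written: if the first line of the message is exactly `Release 20230117` and a body follows, the capture runs across the line break until the next space, while `\S+` stops at the newline. Whether the action reproduces this exactly depends on its regex engine.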

MANIFEST.in

    @@ -1,3 +1,6 @@
     include requirements.txt
     include README.md
     include LICENSE
    +include whisper/assets/*
    +include whisper/assets/gpt2/*
    +include whisper/assets/multilingual/*
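Three `include` lines are needed because, in setuptools' MANIFEST.in handling, `*` does not match across `/`, so `whisper/assets/*` alone would not pick up files in `gpt2/` or `multilingual/`. A rough model of that matching rule (an assumption-level sketch, not setuptools' own code; the file paths are illustrative, not a listing of the real assets directory):

```python
import re
from typing import List

# The three `include` patterns added to MANIFEST.in in this commit.
MANIFEST_PATTERNS = [
    "whisper/assets/*",
    "whisper/assets/gpt2/*",
    "whisper/assets/multilingual/*",
]


def glob_to_regex(pattern: str) -> re.Pattern:
    """Translate a glob, assuming '*' and '?' never match the '/' separator."""
    parts = []
    for ch in pattern:
        if ch == "*":
            parts.append("[^/]*")  # any run of characters within one path segment
        elif ch == "?":
            parts.append("[^/]")   # exactly one character within a segment
        else:
            parts.append(re.escape(ch))
    return re.compile("".join(parts) + r"\Z")


def is_included(path: str, patterns: List[str] = MANIFEST_PATTERNS) -> bool:
    """True if a POSIX-style relative path matches any of the patterns."""
    return any(glob_to_regex(p).match(path) for p in patterns)


for candidate in [
    "whisper/assets/mel_filters.npz",
    "whisper/assets/gpt2/vocab.json",
    "whisper/assets/extra/data.bin",
]:
    print(candidate, is_included(candidate))
# prints True, True, False for the three paths in order
```

Because `setup.py` sets `include_package_data=True`, setuptools also installs matched files that live inside a package directory, not only ships them in the sdist. A single `recursive-include whisper/assets *` or `graft whisper/assets` line would be an alternative way to cover every subdirectory.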

README.md (12 changed lines)

@@ -2,7 +2,7 @@

|

||||

|

||||

[[Blog]](https://openai.com/blog/whisper)

|

||||

[[Paper]](https://arxiv.org/abs/2212.04356)

|

||||

[[Model card]](model-card.md)

|

||||

[[Model card]](https://github.com/openai/whisper/blob/main/model-card.md)

|

||||

[[Colab example]](https://colab.research.google.com/github/openai/whisper/blob/master/notebooks/LibriSpeech.ipynb)

|

||||

|

||||

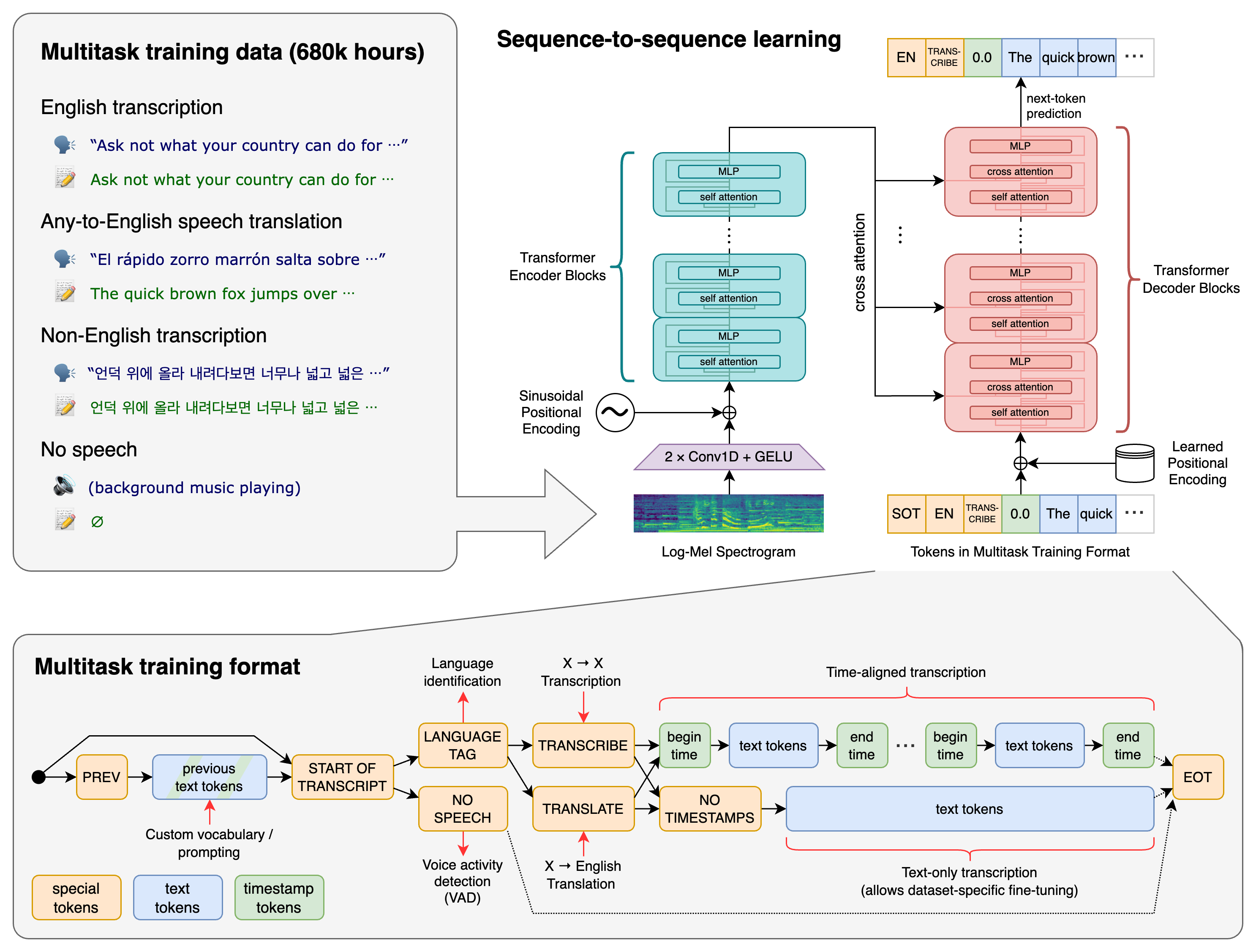

Whisper is a general-purpose speech recognition model. It is trained on a large dataset of diverse audio and is also a multi-task model that can perform multilingual speech recognition as well as speech translation and language identification.

|

||||

    @@ -10,14 +10,14 @@ Whisper is a general-purpose speech recognition model. It is trained on a large
     
     ## Approach
     
     
     A Transformer sequence-to-sequence model is trained on various speech processing tasks, including multilingual speech recognition, speech translation, spoken language identification, and voice activity detection. All of these tasks are jointly represented as a sequence of tokens to be predicted by the decoder, allowing for a single model to replace many different stages of a traditional speech processing pipeline. The multitask training format uses a set of special tokens that serve as task specifiers or classification targets.
     
     
     ## Setup
     
    -We used Python 3.9.9 and [PyTorch](https://pytorch.org/) 1.10.1 to train and test our models, but the codebase is expected to be compatible with Python 3.7 or later and recent PyTorch versions. The codebase also depends on a few Python packages, most notably [HuggingFace Transformers](https://huggingface.co/docs/transformers/index) for their fast tokenizer implementation and [ffmpeg-python](https://github.com/kkroening/ffmpeg-python) for reading audio files. The following command will pull and install the latest commit from this repository, along with its Python dependencies
    +We used Python 3.9.9 and [PyTorch](https://pytorch.org/) 1.10.1 to train and test our models, but the codebase is expected to be compatible with Python 3.7 or later and recent PyTorch versions. The codebase also depends on a few Python packages, most notably [HuggingFace Transformers](https://huggingface.co/docs/transformers/index) for their fast tokenizer implementation and [ffmpeg-python](https://github.com/kkroening/ffmpeg-python) for reading audio files. The following command will pull and install the latest commit from this repository, along with its Python dependencies:
     
         pip install git+https://github.com/openai/whisper.git
     
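The special-token format described in the Approach paragraph can be illustrated at the string level. The sketch assumes the token spellings `<|startoftranscript|>`, `<|en|>`-style language tokens, `<|transcribe|>`/`<|translate|>`, and `<|notimestamps|>`; the real tokenizer in `whisper/tokenizer.py` maps these to integer ids, and `task_prefix` is a hypothetical helper rather than part of its API:

```python
from typing import Dict, List

# Assumed special-token spellings; whisper/tokenizer.py maps them to integer ids.
START_OF_TRANSCRIPT = "<|startoftranscript|>"
NO_TIMESTAMPS = "<|notimestamps|>"
TASK_TOKENS: Dict[str, str] = {
    "transcribe": "<|transcribe|>",  # speech recognition in the spoken language
    "translate": "<|translate|>",    # speech translation into English
}


def task_prefix(language: str, task: str, timestamps: bool = False) -> List[str]:
    """Hypothetical helper: the decoder prompt that selects language and task."""
    if task not in TASK_TOKENS:
        raise ValueError(f"unknown task: {task!r}")
    tokens = [START_OF_TRANSCRIPT, f"<|{language}|>", TASK_TOKENS[task]]
    if not timestamps:
        tokens.append(NO_TIMESTAMPS)  # request plain text without timestamp tokens
    return tokens


print("".join(task_prefix("en", "transcribe")))
# <|startoftranscript|><|en|><|transcribe|><|notimestamps|>
print("".join(task_prefix("de", "translate", timestamps=True)))
# <|startoftranscript|><|de|><|translate|>
```

Language identification fits the same format: instead of supplying the language token, the model is left to predict the token that follows `<|startoftranscript|>`.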

    @@ -68,7 +68,7 @@ For English-only applications, the `.en` models tend to perform better, especial
     
     Whisper's performance varies widely depending on the language. The figure below shows a WER (Word Error Rate) breakdown by languages of Fleurs dataset, using the `large-v2` model. More WER and BLEU scores corresponding to the other models and datasets can be found in Appendix D in [the paper](https://arxiv.org/abs/2212.04356). The smaller is better.
     
     
     
     
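WER counts the word substitutions, deletions, and insertions needed to turn a transcript into the reference, divided by the number of reference words, so lower is better and values above 1 are possible. A plain word-level edit-distance sketch; real evaluations normally normalize casing and punctuation first, which this sketch skips, and `word_error_rate` is a hypothetical helper:

```python
from typing import List


def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words."""
    ref: List[str] = reference.split()
    hyp: List[str] = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            curr[j] = min(
                prev[j] + 1,             # deletion of reference word r
                curr[j - 1] + 1,         # insertion of hypothesis word h
                prev[j - 1] + (r != h),  # substitution, or free match when equal
            )
        prev = curr
    return prev[-1] / len(ref)


print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))  # 0.3333333333333333
```

Here one substitution (`sat` to `sit`) and one deletion (`the`) over six reference words give 2/6.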

    @@ -90,7 +90,7 @@ Run the following to view all available options:
     
         whisper --help
     
    -See [tokenizer.py](whisper/tokenizer.py) for the list of all available languages.
    +See [tokenizer.py](https://github.com/openai/whisper/blob/main/whisper/tokenizer.py) for the list of all available languages.
     
     
     ## Python usage

    @@ -140,4 +140,4 @@ Please use the [🙌 Show and tell](https://github.com/openai/whisper/discussion
     
     ## License
     
    -The code and the model weights of Whisper are released under the MIT License. See [LICENSE](LICENSE) for further details.
    +The code and the model weights of Whisper are released under the MIT License. See [LICENSE](https://github.com/openai/whisper/blob/main/LICENSE) for further details.

setup.py (18 changed lines)

    @@ -3,11 +3,19 @@ import os
     import pkg_resources
     from setuptools import setup, find_packages
     
    +
    +def read_version(fname="whisper/version.py"):
    +    exec(compile(open(fname, encoding="utf-8").read(), fname, "exec"))
    +    return locals()["__version__"]
    +
    +
     setup(
    -    name="whisper",
    +    name="openai-whisper",
         py_modules=["whisper"],
    -    version="1.0",
    +    version=read_version(),
         description="Robust Speech Recognition via Large-Scale Weak Supervision",
    +    long_description=open("README.md", encoding="utf-8").read(),
    +    long_description_content_type="text/markdown",
         readme="README.md",
         python_requires=">=3.7",
         author="OpenAI",
    @@ -20,9 +28,9 @@ setup(
                 open(os.path.join(os.path.dirname(__file__), "requirements.txt"))
             )
         ],
    -    entry_points = {
    -        'console_scripts': ['whisper=whisper.transcribe:cli'],
    +    entry_points={
    +        "console_scripts": ["whisper=whisper.transcribe:cli"],
         },
         include_package_data=True,
    -    extras_require={'dev': ['pytest']},
    +    extras_require={"dev": ["pytest"]},
     )
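`read_version` in the new `setup.py` executes `whisper/version.py` and pulls `__version__` out of the function's `locals()`, so packaging can report the version without importing the package. The sketch below is a variant, not the committed code: it passes an explicit namespace dict to `exec()`, which avoids depending on how `locals()` behaves inside a function. As of Python 3.13 (PEP 667), each `locals()` call in a function returns an independent snapshot, so names bound by a bare `exec()` may not be visible through a later `locals()` call.

```python
import os
import tempfile
from typing import Any, Dict


def read_version(fname: str = "whisper/version.py") -> str:
    """Execute a version file into an explicit namespace and return __version__."""
    namespace: Dict[str, Any] = {}
    with open(fname, encoding="utf-8") as f:
        # The dict serves as the executed code's globals (and therefore its locals).
        exec(compile(f.read(), fname, "exec"), namespace)
    return namespace["__version__"]


# Usage against a throwaway copy of version.py:
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "version.py")
    with open(path, "w", encoding="utf-8") as f:
        f.write('__version__ = "20230117"\n')
    version = read_version(path)

print(version)  # 20230117
```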

whisper/__init__.py

    @@ -12,6 +12,7 @@ from .audio import load_audio, log_mel_spectrogram, pad_or_trim
     from .decoding import DecodingOptions, DecodingResult, decode, detect_language
     from .model import Whisper, ModelDimensions
     from .transcribe import transcribe
    +from .version import __version__
     
     
     _MODELS = {

whisper/version.py (new file, 1 changed line)

    @@ -0,0 +1 @@
    +__version__ = "20230117"
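Together, `whisper/version.py` and the `from .version import __version__` line make one file the single source of the version: `setup.py` reads it for packaging, and `whisper.__version__` exposes it at runtime. The sketch reproduces that layout with a hypothetical throwaway package `demo_whisper_pkg` and then, assuming the version is a `YYYYMMDD` date stamp as `20230117` suggests, parses it into a date:

```python
import importlib
import sys
import tempfile
from datetime import datetime
from pathlib import Path

PKG_NAME = "demo_whisper_pkg"  # hypothetical stand-in for the whisper package

with tempfile.TemporaryDirectory() as tmp:
    pkg_dir = Path(tmp) / PKG_NAME
    pkg_dir.mkdir()
    # version.py holds the only copy of the version string...
    (pkg_dir / "version.py").write_text('__version__ = "20230117"\n', encoding="utf-8")
    # ...and __init__.py re-exports it, mirroring the line added in this commit.
    (pkg_dir / "__init__.py").write_text("from .version import __version__\n", encoding="utf-8")

    sys.path.insert(0, tmp)
    importlib.invalidate_caches()
    try:
        demo_pkg = importlib.import_module(PKG_NAME)
        exported_version = demo_pkg.__version__
    finally:
        sys.path.remove(tmp)

# Assumption: the version string is a YYYYMMDD date stamp.
release_date = datetime.strptime(exported_version, "%Y%m%d").date()
print(exported_version)  # 20230117
print(release_date)      # 2023-01-17
```

A fixed-width date stamp like this also sorts correctly as a plain string, which is one practical reason to prefer it over an unpadded date.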
